Current Projects

iNNk: A Deep Learning Game

iNNk is a multiplayer drawing game in which human players team up against a neural network (NN). The players need to communicate a secret code word to each other through drawings without the NN deciphering it. With this game, we aim to foster a playful environment where players can, in a small but crucial way, go from passive consumers of NN applications to creative thinkers and critical challengers.

Learn more >>

eXplainable AI for Social Bias Detection

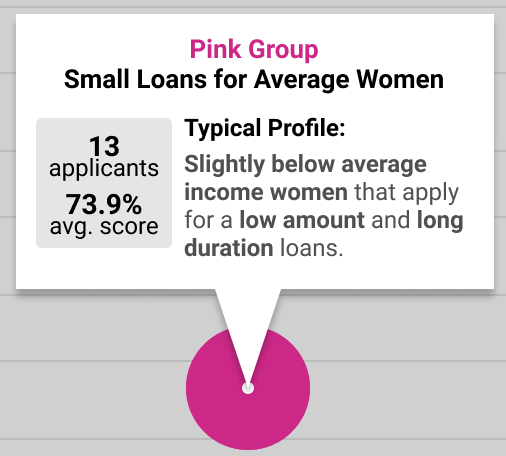

AI algorithms are not immune to biases. Traditionally, non-experts have had little ability to uncover potential social bias (e.g., gender bias) in the algorithms that may impact their lives. With the wide use of deep learning, the issues of interpretability, fairness, and trust have become more pressing.

In this project, we design interactive visualization tools to help non-experts understand key aspects of AI systems and reveal biases. Currently, we are developing an interactive visualization that lets non-experts explore the decisions of a semantic neural network (NN), in the context of profiling models for loan applications, to reveal potential bias.

Learn more >>

Open Player and Community Modeling as a Learning Tool

Personalized learning is an active research area in intelligent tutoring systems and game-based learning. In most personalized systems, the learner does not know how the game categorizes them and why the game changes. We attempt to not only make this information accessible, but also use it to encourage reflection and better learning.

Learn more >>

Balancing Individual and Group Needs in Personalized Adaptive Systems for Improved Health

This project investigates how to increase and sustain physical activity using personalized adaptive social exergames. Two-thirds of the adult population in the U.S. are affected by overweight and obesity, with sedentary behavior as a primary cause. In addition to addressing a public health issue, the technology developed in this project will advance theories in human behavior science. The empirical data generated from the planned system can shed light on the dynamic nature of people’s social comparison processes and reactions. The approach is innovative in bootstrapping design theory, algorithmic innovation, and health behavior science in a synergistic way to make scientific advancement.

Learn more >>

Improving VUI Discoverability: DiscoverCal

Voice User Interfaces (VUIs) are becoming prevalent. However, the “invisible nature” of VUIs makes it difficult for users to learn how to use these systems and to build appropriate mental models of the affordances and limitations of each system. In this project, we explore how to design and develop a personalized, adaptive, multimodal discovery tool to help users learn how to use the system.

Learn more >>

Past Projects

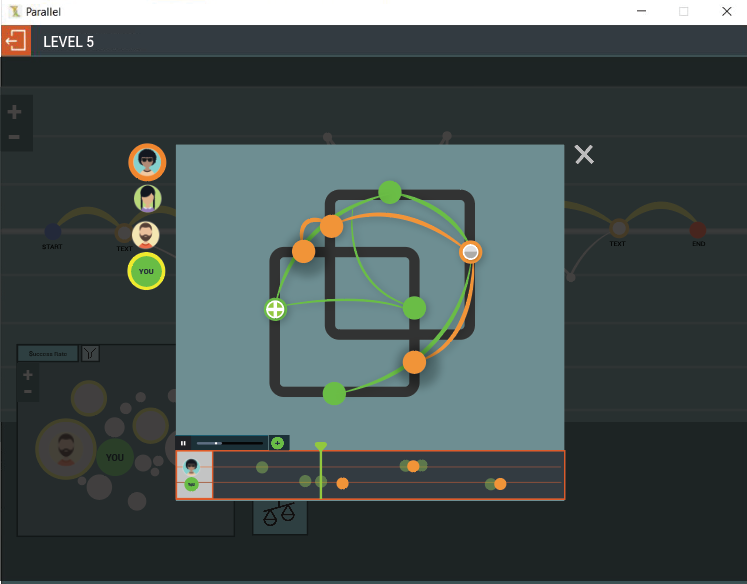

Learning Parallel Programming Concepts Through an Adaptive Game

Modern computing is increasingly handled in a parallel fashion. Despite the growing body of work on how to teach parallel programming, little is understood about how this subject is learned. This project will shed light on the challenge of learning parallel programming and gather initial data on ways to scaffold it in college-level courses. We propose to develop a genre of adaptive learning games in which we will gather data on how experts and novices address parallel programming problems and study ways to scaffold learning.

Learn more >>

TakeControl VR

In this project, we explore virtual reality (VR)-based inhibitory control training with binge eating patients in a personalized and adaptive way. This project is in collaboration with the WELL Center at Drexel University.

Learn more >>

Mirrors of Grimaldi

Mirrors of Grimaldi is an experimental local multiplayer splitscreen game where your health is represented by the size of your screen. Players will be pitted against each other as Grimaldi’s Interdimensional Demonic Carnival invades the same medieval town in parallel timelines. As swarms of demonic minions attack the players, their screens will shrink and eventually crush them, knocking them out of the game. The last surviving player is declared the winner and is allowed to fight another day.

Learn more >>

TAEMILE: Towards Automating Experience Management in Interactive Learning Environment

A key challenge for interactive learning environments is how to automatically co-regulate, balancing learners’ autonomy and the pedagogical processes intended by educators. To achieve personalized and dynamic co-regulation, we explore the use of artificial intelligence (AI) techniques of experience management (EM) in combination with a play-based pedagogical model. As a first step, this NSF-funded exploratory project seeks to collect preliminary data about 1) the relationship between a learner’s achievement goal orientations (learning orientation) and play style, and 2) the impact of dynamically adjusting the learning environment using EM on learners’ autonomy and learning outcomes.

Learn more >>

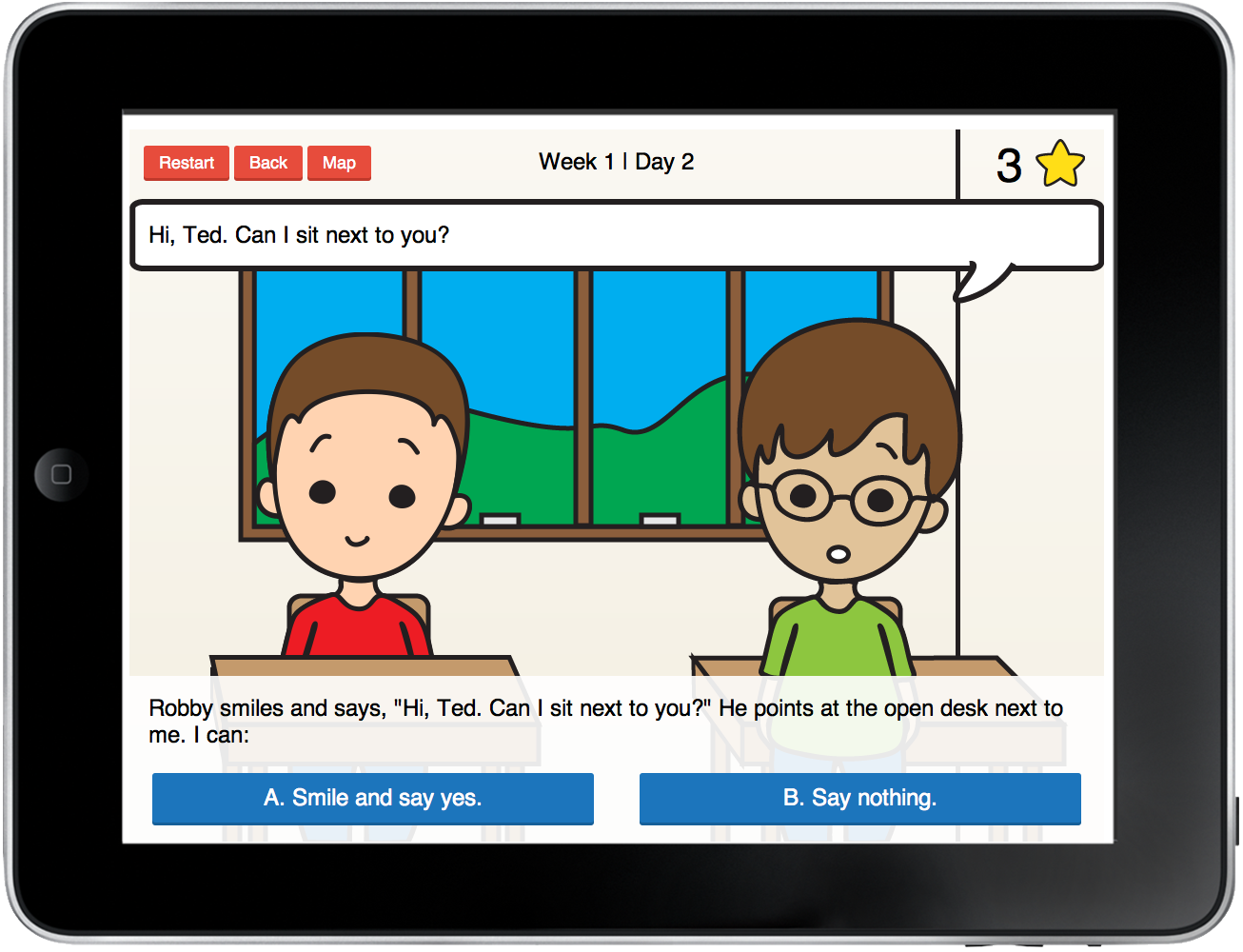

FriendStar: Interactive Social Stories for Children with Autism

This project develops Interactive Social Stories (ISS), an approach that expands the existing intervention of Social Stories with interactive narrative techniques. Our goal is to use ISS to help children with autism spectrum disorder (ASD) learn social skills more effectively.

Learn more >>

Variable Space

Variable Space is a collaborative project that seeks to better define our individual perceptions of space. With a shared understanding that space consists of both our physical surroundings and also intangibles, such as time and emotion, our intent is to develop an investigative process that reveals both the commonality and anomaly in interpretation.

The project employs language as its catalyst and movement as its medium. Trained dancers will react to prompts, which will vary in style from lyrical to instructional to narrative. Through motion capture and digital modeling of the dancers’ movements, defining variables will be studied in greater detail. Because this investigation is both scientific and aesthetic, data and findings will be analyzed and presented through a range of methods and visualizations. Variable Space is a collaboration between Drexel faculty: Valerie Fox, PhD (English and Philosophy), Jacklynn Niemiec (Architecture and Interiors), Leah Stein (Dance), and Jichen Zhu, PhD (Digital Media). The collaboration is funded through the ExCITe Center Seed Grant (Class of 2014).